research

My research focuses on the study of complex systems through a topological lens. These complex systems are often naturally characterized by a network. The topological structure of the network and the functional properties of the processes that take place on, or are described by, the network are often linked. An understanding of the interaction between the topology and function of complex systems provides a foothold for understanding their fundamental pieces, composing these pieces to create more complex behaviors, and augmenting the behavior of these systems for greater societal benefit.

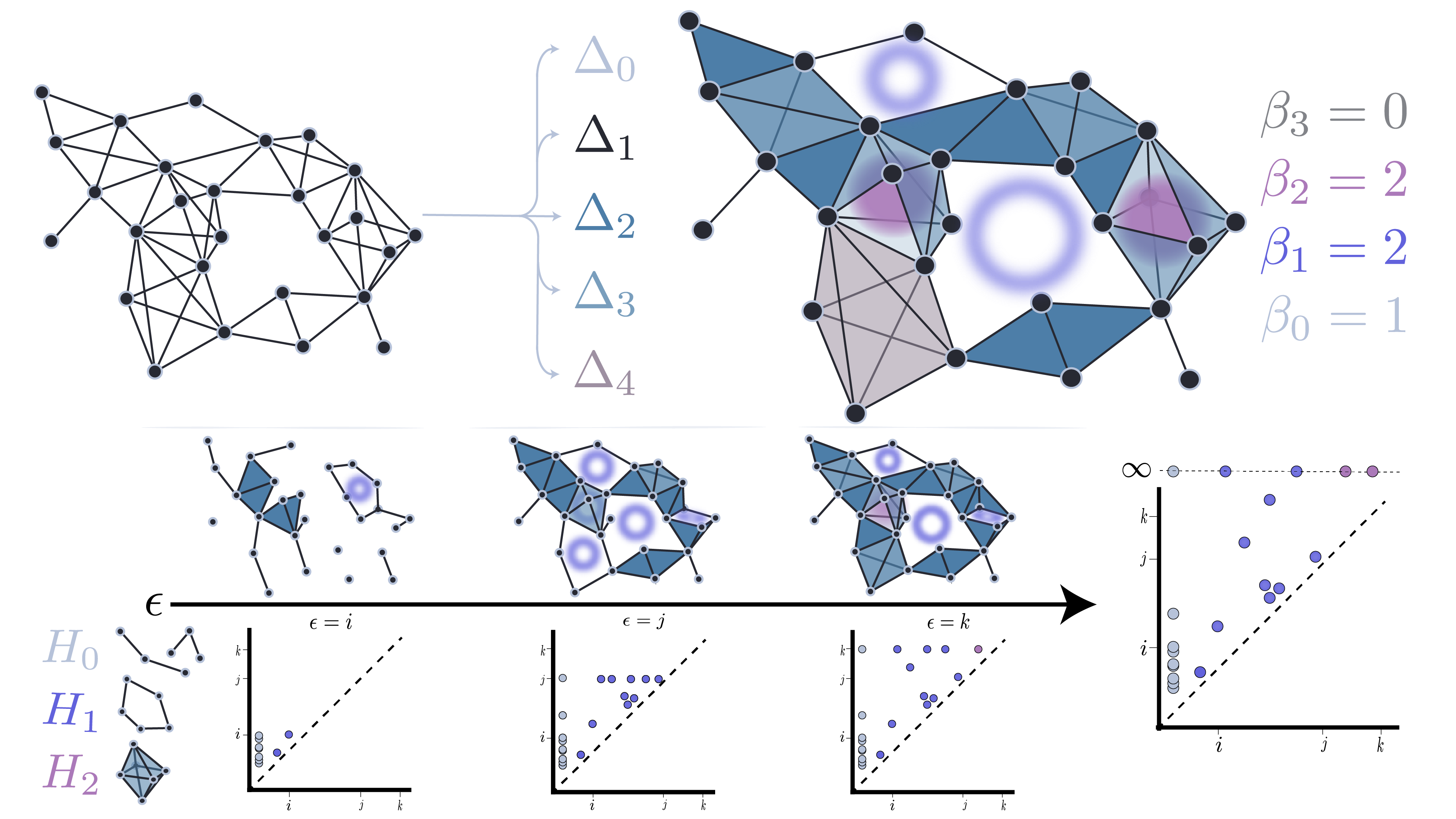

Applied Topology

Topological Data Analysis (TDA) is a relatively new applied-mathematical field whose methods are derived from the much older field of algebraic topology. TDA is concerned with characterizing the “shape” of spaces in an invariant manner. Work in the past two decades has brought theory from algebraic topology into the data sciences by discretizing traditional topological concepts. With this discretization come powerful methods for tracking local-to-global structural properties within high-dimensional data. My research borrows many methods from TDA, including persistent homology, combinatorial Hodge theory, and the theory of cellular sheaves.

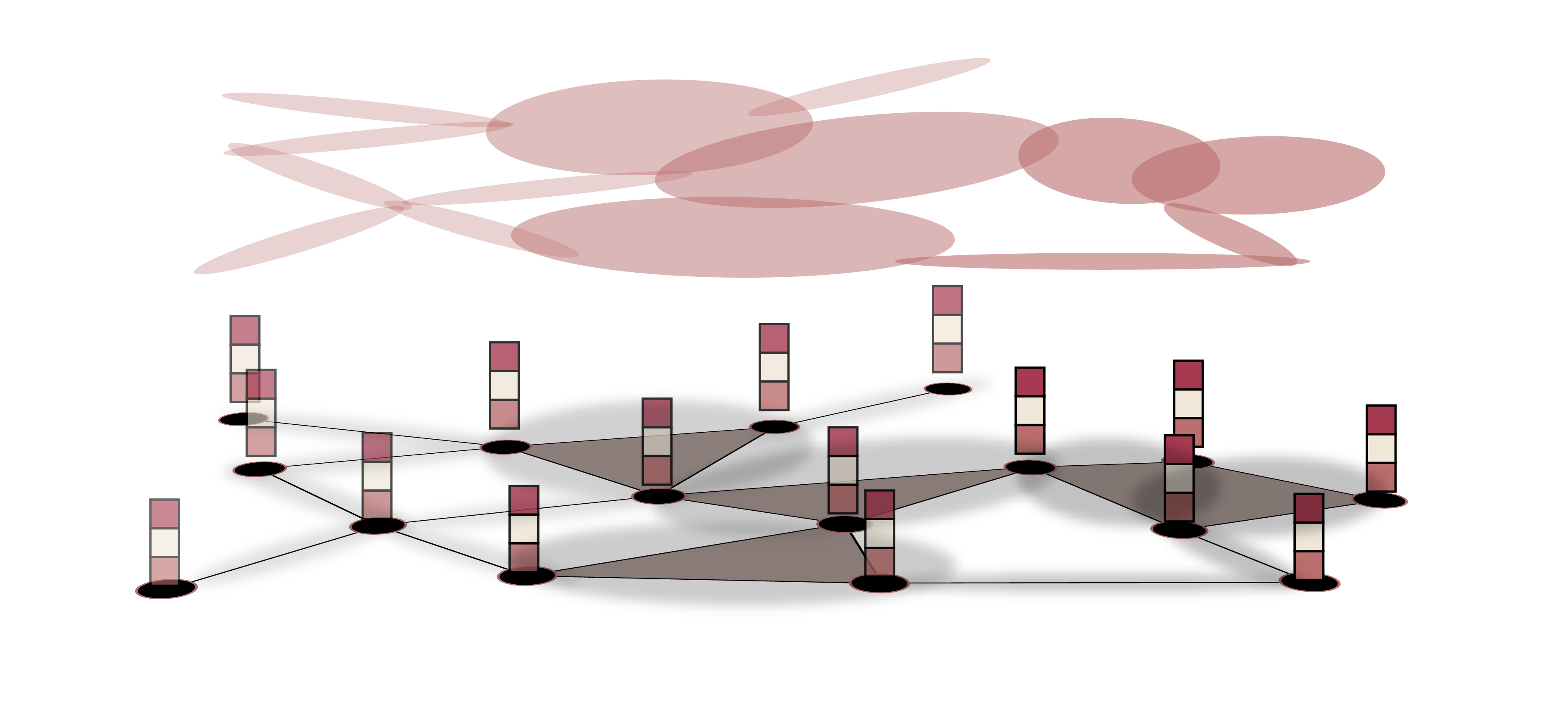

Network Science and Sociology

Computational social sciences like Economics or Sociology of Science frequently model the systems they study using network-theoretic tools. For example, the effects of production shocks to sectors of the economy may be approximated using the topology of the network of producer-supplier relationships. The propagation of knowledge in a community is often modeled as an abstract form of diffusion across the community’s social network. Metrics of scientific and technological disruption are derived from the network of citations among scientific articles. Many models of social phenomena have a network structure at their core. My research seeks to improve these models, and to expand the range of social phenomena that can be modeled tractably, by applying a topological lens.

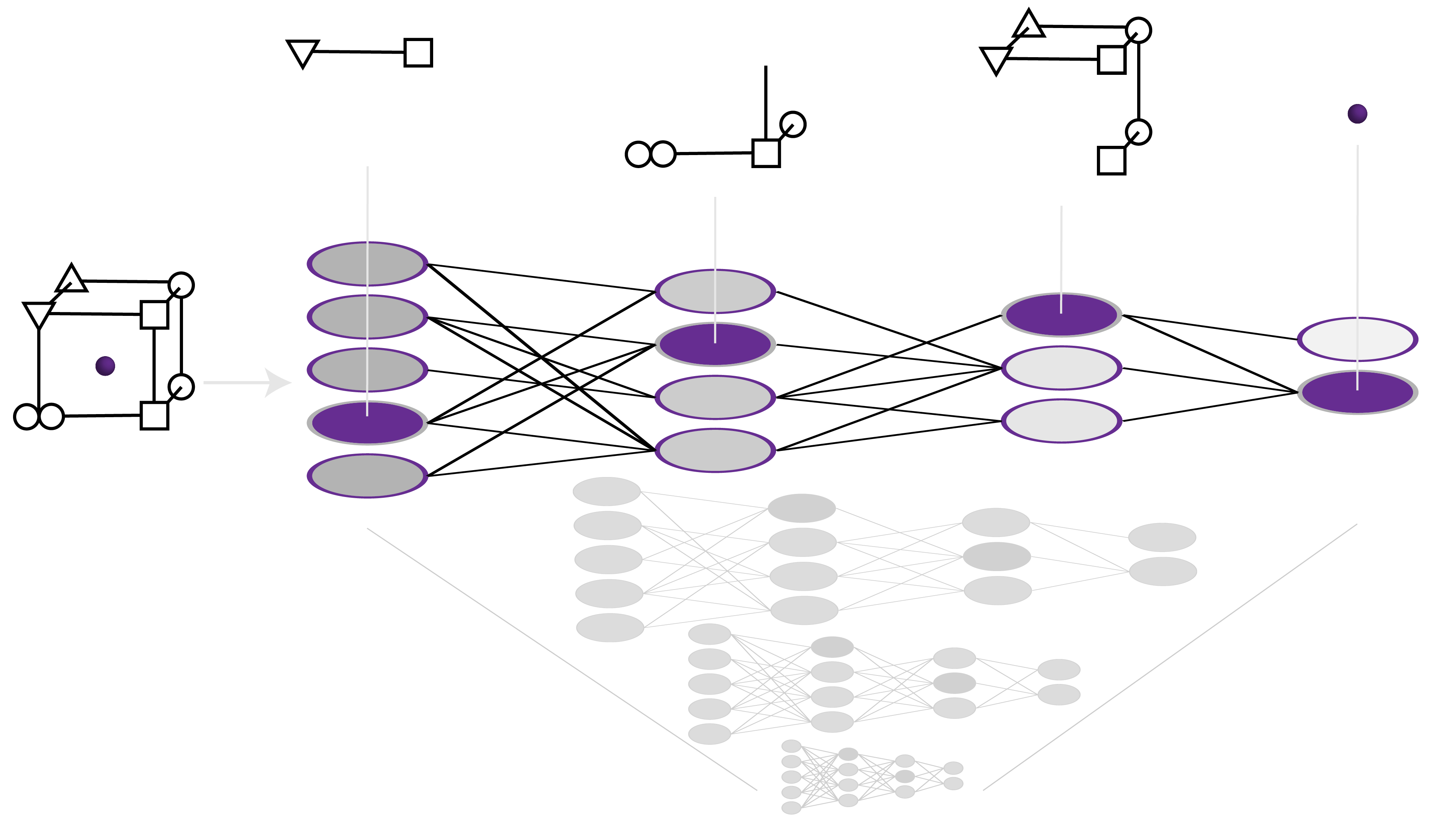

Structured Knowledge in Deep Learning

The connectivity structure of deep neural networks plays a dominant role in their function. However, because these networks are so large and complicated, little is understood about how this structure gives rise to the function of these models. I am interested in how semantic knowledge about a domain is encoded by these models, and how we can control or augment this knowledge in a way that is beneficial towards scientific or societal goals. The successful implicit biases embedded within networks by their architectural specifications and initialization methods can provide insight into the high-dimensional structure present in the data used to train the network. An understanding of these biases can also help point us towards network architectures that are better suited to particular tasks, and that train more quickly, require less data, and generalize to unseen data in more controllable and expected ways.